I failed to link to this anecdote when it was making the rounds earlier in the year, but this is a legitimately bonkers story about a fun/maddening debugging session (you know it’s a good sign when you’re breaking out strace to diagnose a yarn script). 🚋

Tag: programming

-

Cloudflare: “A steam locomotive from 1993 broke my yarn test”

-

Area 5150 — mindbending IBM 8088 demo

Another of the recent mind-boggling demoscene demos: here’s a bonkers one pulling off tricks on vintage IBM 8088 PC hardware that the 16-color CGA graphics adapter shouldn’t be capable of doing. Remember, this is a computer setup from circa 1981!

My favorite part of these kinds of demos is when the audience goes wild (well, relatively) for the breakdancing elephant animation, even more than for the pseudo-3D graphics and psychedelic color scanline gimmicks.

-

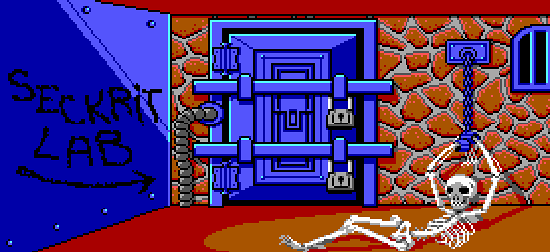

Monkey Island 2 — Talkie Prototype!

I love when people dig up new dirt on my favorite things from 30-ish years ago, in this case a playable prototype of a never-developed “talkie” version of LucasArts’ Monkey Island 2: LeChuck’s Revenge. The folks at the venerable MixNMojo site have a good writeup, including a detailed archeology of the differences and new sound resources discovered, along with information and images of LucasArts’ internal debugging tool called Windex (which ran on a second monitor in Hercules monochrome graphics mode!). Neat.

-

Maniac Tentacle Mindbenders: How ScummVM’s unpaid coders kept adventure gaming alive

Nice write-up by Ars Technica on the ScummVM project’s history and developers. Hard to believe it’s been around for over 10 years already! (Also, I hadn’t heard that they had a short-lived, controversial build that supported Eric Chahi’s Another World, one of the best games of all time…)

-

Maniac Mansion Disassembled

The Mansion – Technical Aspects

If you love the old Lucasfilm games and want a peek into how their venerable game engine worked from a very technical perspective, you should read this article that walks through a disassembled Maniac Mansion. Extra bonus: Ron Gilbert, the creator of the SCUMM scripting language, drops a lengthy note in the comments section with insider info:

One of the goals I had for the SCUMM system was that non-programers could use it. I wanted SCUMM scripts to look more like movies scripts, so the language got a little too wordy. This goal was never really reached, you always needed to be a programmer. 🙁

Some examples:

actor sandy walk-to 67,8

This is the command that walked an actor to a spot.

actor sandy face-right

actor sandy do-animation reach

walk-actor razor to-object microwave-oven

start-script watch-edna

stop-script

stop-script watch-edna

say-line dave “Don’t be a tuna head.”

say-line selected-kid “I don’t want to use that right now.”

I think it’s amazing that they managed to build a script interpreter with preemptive multitasking (game events could happen simultaneously, allowing for multiple ‘actors’ to occupy the same room, the clock in the hallway to function correctly, etc.), clever sprite and scrolling screen management, and a fairly non-linear set of puzzles into software originally written for the 8-bit C64 and Apple II era of computers.
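To get a feel for what "several scripts running at once" means in practice, here is a toy sketch in Python. It is not how SCUMM was implemented: it uses plain round-robin scheduling over generators, where each script hands control back voluntarily with `yield`, and every name in it (`walk_to`, `clock`, `run_scripts`) is made up for illustration.

```python
from collections import deque


def walk_to(log, actor, x, y, steps=3):
    """Hypothetical script: move an actor toward a spot, one step per turn."""
    for i in range(1, steps + 1):
        log.append(f"{actor} walks toward {x},{y} (step {i}/{steps})")
        yield  # hand control back so other scripts get a turn


def clock(log, ticks=3):
    """Hypothetical script: a background clock that ticks once per turn."""
    for t in range(1, ticks + 1):
        log.append(f"clock ticks ({t})")
        yield


def run_scripts(scripts):
    """Give each script one turn in round-robin order until all have finished."""
    queue = deque(scripts)
    while queue:
        script = queue.popleft()
        try:
            next(script)          # run the script up to its next yield
        except StopIteration:
            continue              # script finished, drop it
        queue.append(script)      # still running, put it at the back of the line


log = []
run_scripts([walk_to(log, "sandy", 67, 8), clock(log)])
for line in log:
    print(line)
```

The walking and the clock ticks come out interleaved, which is the effect that lets an actor cross the room while the hallway clock keeps running.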

-

Yukikaze

-

parchment

Parchment is a JavaScript-powered Z-machine interpreter. Translation: you can now play your Zork and your Leather Goddesses of Phobos (or more modern pieces of interactive fiction) without leaving the comfort of your web browser.

(Via O’Reilly Radar)

-

Akihabara

Nice-looking little HTML5 <canvas> 2D game engine and toolkit written in JavaScript. More and more, apps are moving to the browser and out of the land of plugins and standalone RIA clients.

-

John Balestrieri’s Generative Painting Algorithms

John Balestrieri is tinkering with generative painting algorithms, trying to produce a better automated “photo -> painting” approach. You can see his works in progress on his tinrocket Flickr stream. (Yes, there are existing Photoshop / Painter filters that do similar things, but this one aims to be closer to making human-like decisions, and no, this isn’t in any way suggestive that machine-generated renderings will replace human artists – didn’t we already get over that in the age of photography?)

Whatever the utility, trying to understand the human hand in art through code is a good way to learn a lot about color theory, construction, and visual perception.

(Via Gurney Journey)

-

Magician Marco Tempest Demonstrates a Portable AR Screen

Magician Marco Tempest demonstrates a portable “magic” augmented reality screen. The system uses a laptop, small projector, a PlayStation Eye camera (presumably with the IR filter popped out?), some IR markers to make the canvas frame corner detection possible, Arduino (?), and openFrameworks-based software developed by Zachary Lieberman. I really love this kind of demo – people on the street (especially kids) intuitively understand what’s going on. This work reminds me a lot of Zack Simpson’s Mine-Control projects, especially with the use of cheap commodity hardware for creating a fun spectacle.

(Via Make)