Hey, that’s not a very nice thing to call game developers! Oh, you mean literal slime molds…

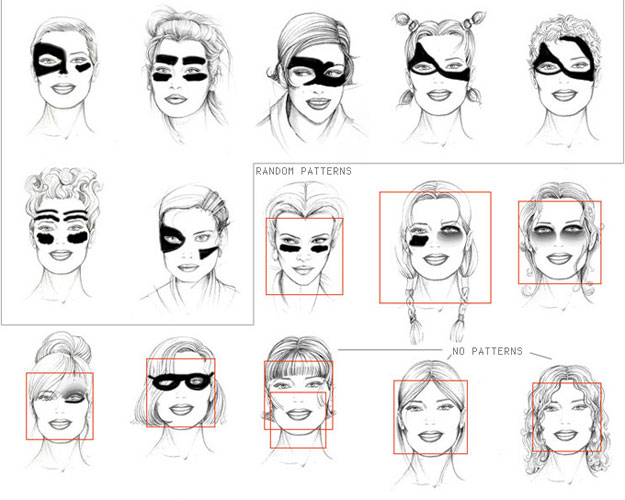

British computer scientists are taking inspiration from slime to help them find ways to calculate the shape of a polygon linking points on a surface. Such calculations are fundamental to creating realistic computer graphics for gaming and animated movies. The quicker the calculations can be done, the smoother and more realistic the graphics. …
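The article doesn't spell out any algorithm. Still, if you're wondering what "the shape of a polygon linking points" means for an ordinary computer, here's a minimal sketch of one conventional, non-slime way to do it: the convex hull, computed with Andrew's monotone chain. This is a textbook technique swapped in for illustration. It is not Adamatzky's slime-mould method, and the function names are hypothetical.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def cross(o: Point, a: Point, b: Point) -> float:
    """Z-component of (a - o) x (b - o); positive means o -> a -> b turns counter-clockwise."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def convex_hull(points: List[Point]) -> List[Point]:
    """Hypothetical helper: return hull vertices counter-clockwise, starting from the lowest (x, y) point."""
    pts = sorted(set(points))  # sort by x, then y; drop duplicate points
    if len(pts) <= 2:
        return pts

    # Build the lower chain, popping points that would make a clockwise (or straight) turn.
    lower: List[Point] = []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)

    # Build the upper chain the same way, walking the points right to left.
    upper: List[Point] = []
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)

    # The last point of each chain is the first point of the other, so drop it before joining.
    return lower[:-1] + upper[:-1]


if __name__ == "__main__":
    sample = [(0, 0), (2, 0), (1, 1), (2, 2), (0, 2), (1, 3)]
    print(convex_hull(sample))  # (1, 1) is inside, so it is not a hull vertex
```

A convex hull is the tightest *convex* polygon around the points. It can't follow inward dents in a point set. Capturing those takes a concave-hull method, which this sketch doesn't attempt.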

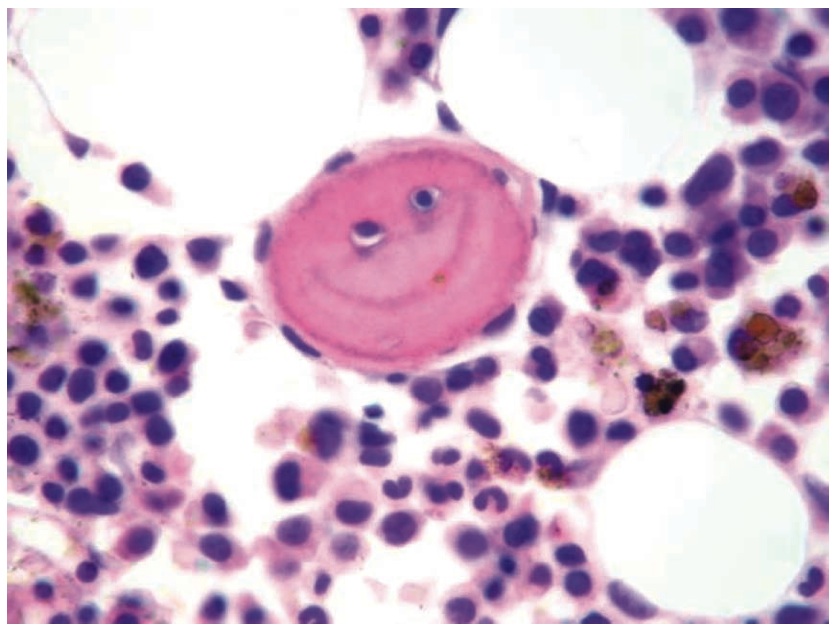

Adamatzky explains that the slime mould *Physarum polycephalum* has a complicated lifecycle with fruit bodies, spores, and single-cell amoebae, but in its vegetative (plasmodium) stage it is essentially a single cell containing many nuclei. The plasmodium forages for nutrients, extending tube-like appendages to explore its surroundings and absorb food. As is often the case in natural systems, the network of tubes has evolved to absorb nutrients quickly and efficiently while using minimal resources to do so.

The Internet will someday be a series of (feeding) tubes?