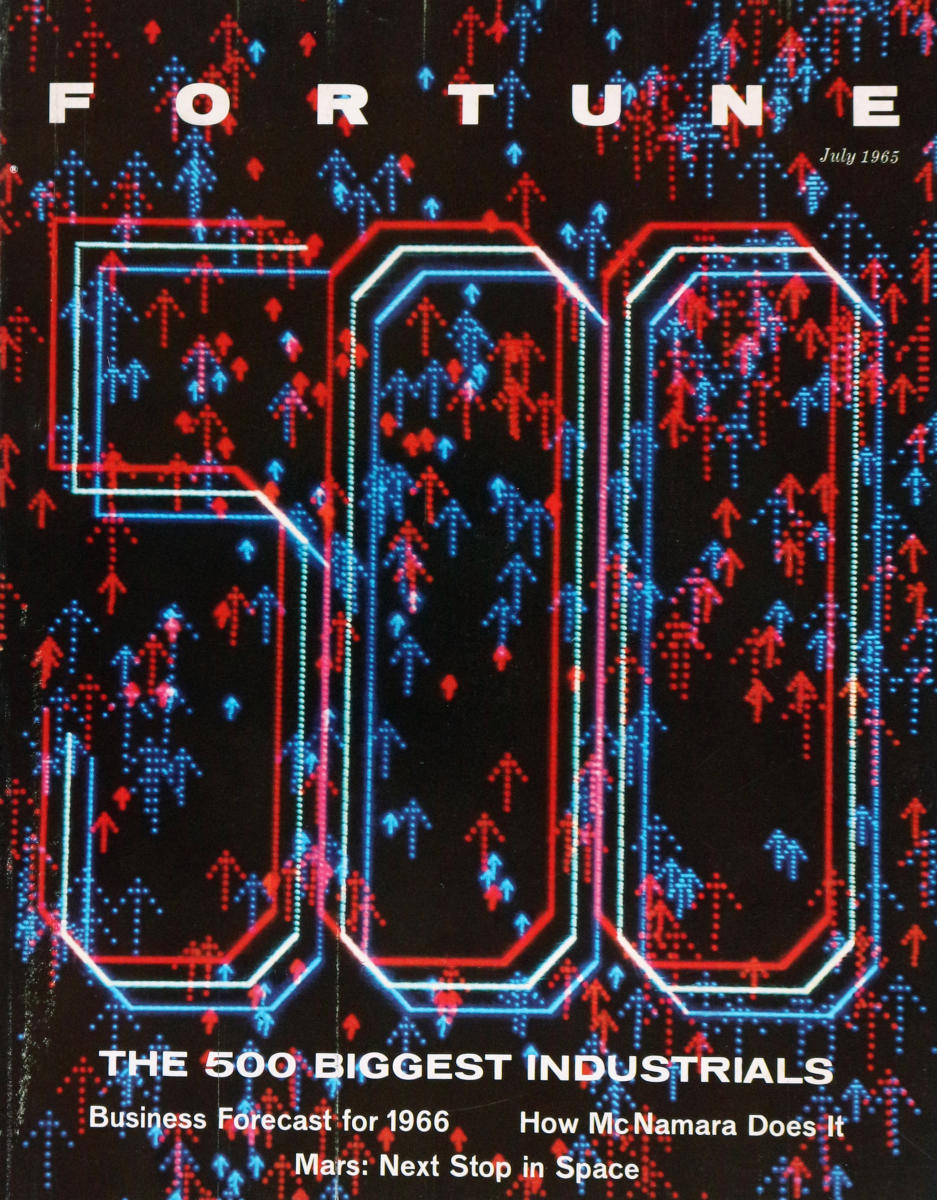

In the current era of concerns about generative AI / LLMs substituting for creative work, this anecdote about the first magazine cover to be generated by a computer (a DEC PDP-1 in this case, which apart from other hacker lore is also remembered as the hardware for the first known video game, Spacewar!) way back in 1965 reminds me that it’s still a pretty old concern that designers will transition to become prompt engineers:

“In the course of events, Fortune’s art director, Walter Allner, might have frowned on filling the column at left with an array of abbreviations and figures, for Allner is no man to waste space on uninspired graphics. But these figures are his special brain children. They are the instructions that told a PDP-1 computer how to generate the design on this month’s cover. This program was ‘written’ to Allner’s specifications and punched into an eight-channel paper tape by Sanford Libman and John Price, whose interest in art and electronics developed at M.I.T.

Generating the design on an oscilloscope and photographing required about three hours of computer time and occupied Price, Allner, and Libman until four one morning. Multiple exposure through two filters added color to the electron tube’s glow. […]

Allner confesses to certain misgivings about teaching the PDP-1 computer too much about Fortune cover design, but adds, philosophically: ‘If the computer puts art directors out of work, I’ll at least have had some on-the-job training as a design-machine programer [sic].’

It’s not mentioned in this article, and it doesn’t look like the choices of color filters and offsets were intentional, but I have to think that this Fortune cover would look pretty amazing through a pair of 1950s-style red/blue anaglyph 3D glasses…