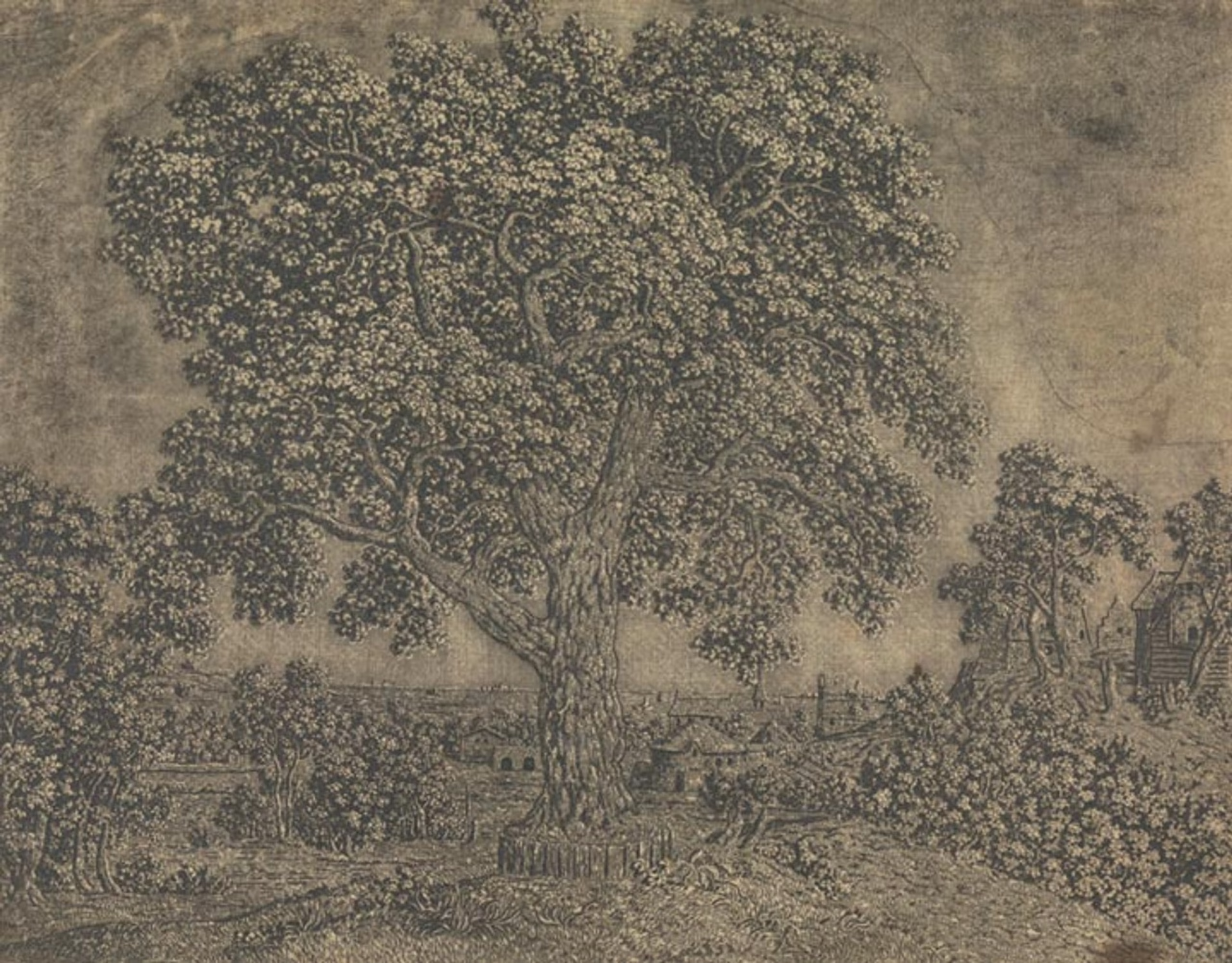

In addition to his landscape paintings, early 17th-century painter and etcher Hercules Segers (also spelled Seghers) made some ghostly, shimmery prints that seem to prefigure the Romantics and their sublimity by a good hundred years. He was also a pioneer of a number of etching techniques, from sugar-bite aquatint to an advanced three-tone plate preparation process that evidently no one else has used before or since:

> The pronounced diagonal hatching that we see in the detail at left maintains traces of the original layer of hatched lines that Segers applied to his printing plate. At that stage, he also applied a solution of animal fat mixed with oil or pine resin dissolved in turpentine, now known as stopping-out varnish. Segers applied this varnish to the areas where he wanted to create white highlights, evidenced here in the areas of the print that have no lines. The varnish once applied would protect those parts of the copper printing plate from being bitten by the etching acid.

As a longtime Photoshop user, I still think a lot about the image histogram, which represents the tonal range and variations of a digital image file and which artists use to adjust, mask, preserve, and work in the highlights, shadows, and midtones. It feels like Segers was capturing this idea through the intricacies of the etching process, long before photography was a concern.

The quote above is from The Met’s writeup of Segers’s process, which also discusses Rembrandt’s admiration for the earlier artist’s work and links to other examples of his impressions and unique “printed paintings.”