What a nicely-designed resource for information about color theory along with some material about its use in UX and data visualization!

Tag: color

-

An Interactive Guide to Color & Contrast

-

OKLCH in CSS: why we moved from RGB and HSL

Color nerdery ahead: I’ve been a fan of the CIELAB color space ever since I discovered Lab mode in Photoshop 20-ish years ago (it’s so awesome and useful to be able to manipulate color channels separately from lightness!), and so I’m thrilled that web design is heading in that direction as well with the new OKLCH color space in CSS Color 4.

This article from Evil Martians about why they’ve made the switch to OKLCH is a great read on the ins and outs of the new color space and why you should consider using it over the more familiar ancient standards. The TL;DR: unlike hexadecimal or RGBa values, LCH values are much easier to read and adjust directly in CSS. Want to make a color more saturated? Just raise the middle value, chroma! Oh, and perceived contrast stays roughly the same between different colors so long as the lightness value (the L) remains the same, which makes meeting the WCAG color contrast guidelines that much easier.
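To make the "just adjust the middle value" point concrete, here is a minimal Python sketch that builds CSS `oklch()` strings and bumps only the chroma. The `Oklch` class and `with_chroma` helper are hypothetical names invented for this illustration, not part of any library or of the Evil Martians article.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Oklch:
    """Hypothetical holder for the three OKLCH components."""
    lightness: float  # 0.0 (black) to 1.0 (white)
    chroma: float     # 0.0 is gray; larger values are more saturated
    hue: float        # hue angle in degrees

    def css(self) -> str:
        # CSS Color 4 syntax: oklch(<lightness> <chroma> <hue>)
        return f"oklch({self.lightness * 100:g}% {self.chroma:g} {self.hue:g})"


def with_chroma(color: Oklch, delta: float) -> Oklch:
    """Return a copy with only the chroma changed; lightness and hue stay put."""
    return replace(color, chroma=max(0.0, color.chroma + delta))


base = Oklch(lightness=0.7, chroma=0.1, hue=250)
print(base.css())                     # oklch(70% 0.1 250)
print(with_chroma(base, 0.05).css())  # oklch(70% 0.15 250)
```

Because lightness is untouched, the more saturated variant should keep roughly the same perceived contrast against a given background.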

I also learned from this in-depth article that Adobe Photoshop has adopted the OKLab space as a “perceptual” option when generating color gradients. Look at how ugly that “classic” gradient is in their screenshot! Gradients in Photoshop have always been messed up, so this is a pretty huge change that they’ve made.

-

The Realities And Myths Of Contrast And Color

From Andrew Somers, a great primer on how color vision works and how illuminated display technology maps perception to luminance contrast, color gamut, etc. Especially useful is his writeup of WCAG 2’s limitations for determining proper contrast for accessibility needs, and of upcoming methods like APCA (Accessible Perceptual Contrast Algorithm) that will pave the way for more useful and relevant a11y standards.
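For reference, the WCAG 2 contrast check that the article critiques is a short formula. The sketch below follows the relative luminance and contrast ratio definitions from the WCAG 2 text; the function names are my own, and it only handles six-digit hex colors. It is not APCA, which uses a different and more involved calculation.

```python
def _linearize(channel_8bit: int) -> float:
    """Convert one 8-bit sRGB channel to linear light, per the WCAG 2 definition."""
    c = channel_8bit / 255
    # WCAG 2 uses 0.03928 as the threshold between the linear and power segments.
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a '#rrggbb' color (0.0 for black, 1.0 for white)."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(color_a: str, color_b: str) -> float:
    """WCAG 2 contrast ratio, from 1 (identical) to 21 (black on white)."""
    la, lb = relative_luminance(color_a), relative_luminance(color_b)
    lighter, darker = max(la, lb), min(la, lb)
    return (lighter + 0.05) / (darker + 0.05)
```

Black on white comes out at the maximum of 21, and WCAG 2 level AA asks for at least 4.5 for body text. Note that the ratio is symmetric, so it scores dark-on-light and light-on-dark pairs identically.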

-

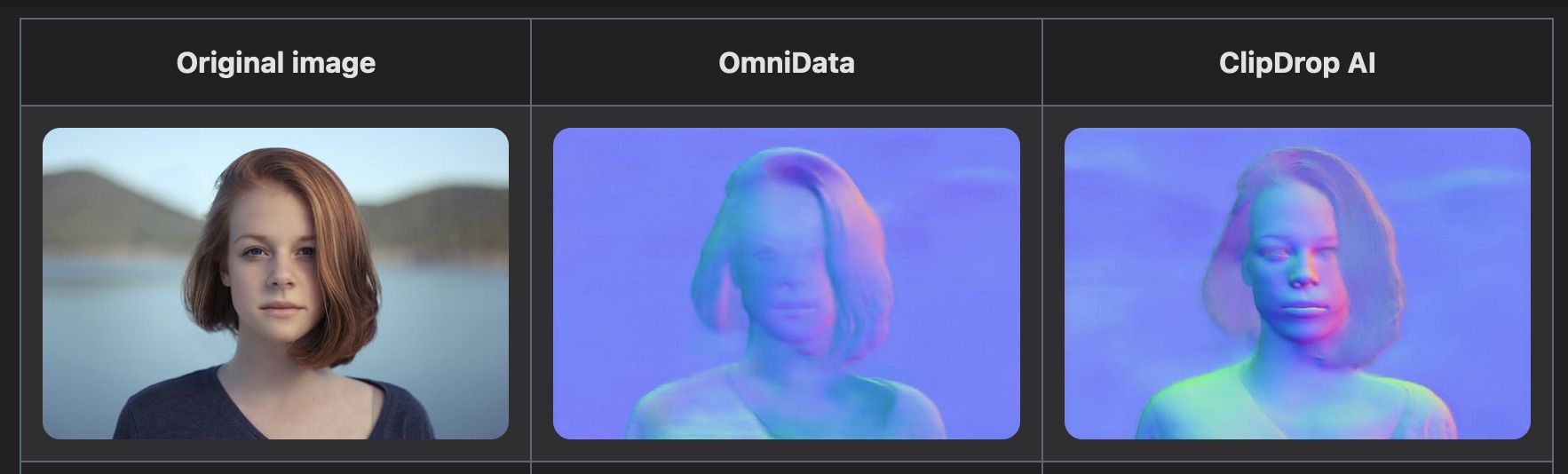

ClipDrop: AI for Image Relighting

This is a compelling use of AI for photographic manipulation (in my mind more practical than many of the other AI image generation examples that are flooding the art websites these days): basically the software can analyze a photograph, use AI to generate a pretty accurate depth map of the subject of the photo, and then use that for dynamic relighting (allowing you to add different artificial lights, color gels, etc.). You can try the web-based demo on your own photos! Neat.

-

Colors: Where did they go?

A nice write-up on color grading in films, especially after the 1990s advent of digital intermediates and LUTs — or to say it more clearly, Why do movies all look like that these days??

-

Why we’re blind to the color blue

If you’re super nerdy and experienced with using Photoshop’s individual color channels to make enhancements or custom masks, you might have noticed that the blue channel has very little influence on the overall sharpness of an RGB image — it never occurred to me that this is inherently a function of our own human eyesight, which is unable to properly focus on blue light, in comparison to other wavelengths!

I like how the paper from the Journal of Optometry that this article links to straight up dunks on our questionably-designed eyeballs:

In conclusion, the optical system of the eye seems to combine smart design principles with outstanding flaws. […] The corneal ellipsoid shows a superb optical quality on axis, but in addition to astigmatism, it is misaligned, deformed and displaced with respect to the pupil. All these “flaws” do contribute to deteriorate the final optical quality of the cornea. Somehow, there could have been an opportunity (in the evolution) to have much better quality, but this was irreparably lost.

This also made me wonder if this blue-blurriness has anything to do with the theory that cultures around the world and through history tend to develop words for colors in a specific order, with words for “blue” appearing relatively late in a language’s development. That seems likely, since hard-to-distinguish colors take longer to identify and classify, even for test subjects who already have words for them!

-

Random Numbers Through a Quantum Vacuum

Your random number generator not truly random enough for you? Maybe you should try some of the numbers coming off of the Australian National University’s quantum vacuum randomization server. Nothing like minute variations in a field of near-silence to get some unfettered randomness, I guess. They offer access to the vacuum through a few different forms of data – seen above is a chunk of their randomly-colored pixel stream. Science!

(Via Science Daily)

-

Herman Melville on the Nature of Color

From Moby-Dick, chapter 42, “The Whiteness of the Whale”:

Is it that by its indefiniteness it shadows forth the heartless voids and immensities of the universe, and thus stabs us from behind with the thought of annihilation, when beholding the white depths of the milky way? Or is it, that as in essence whiteness is not so much a color as the visible absence of color, and at the same time the concrete of all colors; is it for these reasons that there is such a dumb blankness, full of meaning, in a wide landscape of snows – a colorless, all-color of atheism from which we shrink? And when we consider that other theory of the natural philosophers, that all other earthly hues – every stately or lovely emblazoning – the sweet tinges of sunset skies and woods; yea, and the gilded velvets of butterflies, and the butterfly cheeks of young girls; all these are but subtile deceits, not actually inherent in substances, but only laid on from without; so that all deified Nature absolutely paints like the harlot, whose allurements cover nothing but the charnel-house within; and when we proceed further, and consider that the mystical cosmetic which produces every one of her hues, the great principle of light, for ever remains white or colorless in itself, and if operating without medium upon matter, would touch all objects, even tulips and roses, with its own blank tinge – pondering all this, the palsied universe lies before us a leper; and like wilful travellers in Lapland, who refuse to wear colored and coloring glasses upon their eyes, so the wretched infidel gazes himself blind at the monumental white shroud that wraps all the prospect around him. And of all these things the Albino Whale was the symbol. Wonder ye then at the fiery hunt?

-

26 Terabit-per-second Laser

Researchers at the Karlsruhe Institute of Technology set a new record by transmitting 26 terabits of data per second (“the entire Library of Congress in 10 seconds!” as the usual benchmark goes) using a single laser and a clever FFT and frequency comb technique to split the light into 300+ discrete colors:

The Fourier transform is a well-known mathematical trick that can in essence extract the different colours from an input beam, based solely on the times that the different parts of the beam arrive. The team does this optically – rather than mathematically, which at these data rates would be impossible – by splitting the incoming beam into different paths that arrive at different times, recombining them on a detector. In this way, stringing together all the data in the different colours turns into the simpler problem of organising data that essentially arrive at different times.

Neat.

(Via ACM TechNews)
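The quoted idea, that a Fourier transform pulls the individual "colours" (frequencies) out of a signal using only how it varies over time, can be sketched numerically. This is the mathematical version that the researchers avoid at these data rates, not their optical setup; the toy signal and function names below are assumptions made for illustration.

```python
import cmath
import math


def dft_magnitudes(samples):
    """Return |X[k]| for the lower half of the spectrum (naive O(n^2) DFT)."""
    n = len(samples)
    return [
        abs(sum(x * cmath.exp(-2j * math.pi * k * t / n) for t, x in enumerate(samples)))
        for k in range(n // 2)
    ]


def dominant_bins(samples, count):
    """Indices of the `count` strongest frequency bins, in ascending order."""
    mags = dft_magnitudes(samples)
    strongest = sorted(range(len(mags)), key=lambda k: mags[k], reverse=True)[:count]
    return sorted(strongest)


# Toy "beam": two frequencies mixed into one time series of 64 samples.
n = 64
signal = [
    1.0 * math.cos(2 * math.pi * 3 * t / n) + 0.5 * math.cos(2 * math.pi * 7 * t / n)
    for t in range(n)
]
print(dominant_bins(signal, 2))  # [3, 7]
```

The two mixed frequencies come back out as separate bins, which is the same separation the team performs with delayed optical paths instead of arithmetic.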

-

Physicists break color barrier for sending, receiving photons

To be filed under “research I like reading about even if I don’t quite understand how it works”, new studies from the University of Oregon into altering and controlling the color of light on the scale of individual photons in fiber optic signalling:

In experiments led by Raymer’s doctoral student Hayden J. McGuinness, researchers used two lasers to create an intense burst of dual-color light, which when focused into the same optical fiber carrying a single photon of a distinct color causes that photon to change to a new color. This occurs through a process known as Bragg scattering, whereby a small amount of energy is exchanged between the laser light and the single photon, causing its color to change. […]

“In the first study, we worked with one photon at a time with two laser bursts to change the energy and color without using hydrogen molecules,” he said. “In the second study, we took advantage of vibrating molecules inside the fiber interacting with different light beams. This is a way of using one strong laser of a particular color and producing many colors, from blue to green to yellow to red to infrared.”

The laser pulse used was 200 picoseconds long. A picosecond is one-trillionth of a second. Combining the produced light colors in such a fiber could create pulses 200,000 times shorter – a femtosecond (one quadrillionth of a second).

(Via ACM TechNews)
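As a quick sanity check on the pulse-length figures quoted above (using only the numbers in the excerpt), dividing the 200-picosecond pulse by 200,000 does land on one femtosecond:

$$
\frac{200\ \text{ps}}{200{,}000} = \frac{200 \times 10^{-12}\ \text{s}}{2 \times 10^{5}} = 1 \times 10^{-15}\ \text{s} = 1\ \text{fs}
$$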