What a nicely-designed resource for information about color theory along with some material about its use in UX and data visualization!

Tag: visualization

-

An Interactive Guide to Color & Contrast

-

What Do You See when You Read

In this way we are backwards phrenologists, we readers. Extrapolating physiques from minds.

From Jacket Mechanical’s nice mini-essay on the difficulties of visualizing characters from novels, how our minds fill in the textual lacunae with broad brushstrokes of personality over literal physical features.

“Call me Ishmael.” What happens when you read this line? You are being addressed, but by whom? Chances are you hear the line (in your mind’s ear) before you picture the speaker. I can hear Ishmael’s words more clearly than I can see his face. (Audition requires different neurological processes than vision, or smell. And I would submit that we hear more when we read than we see). Picturing Ishmael requires a strong resolve.

(Via Coudal Partners)

-

The Deleted City

The Deleted City is an installation that lets visitors explore the virtual ‘homesteads’ of Geocities.com, the most popular gathering place on the 1990s WWW. For those not familiar, the site made it easy for the average person to set up a basic website (tacky graphics and all), and then group it into a ‘neighborhood’ based on the site’s presumed subject matter.

The installation is an interactive visualisation of the 650 gigabyte Geocities backup made by the Archive Team on October 27, 2009. It depicts the file system as a city map, spatially arranging the different neighbourhoods and individual lots based on the number of files they contain.

In full view, the map is a datavisualisation showing the relative sizes of the different neighbourhoods. While zooming in, more and more detail becomes visible, eventually showing individual html pages and the images they contain. While browsing, nearby MIDI files are played.
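The "city map sized by file count" idea is essentially a treemap. As a rough illustration only (this is not the installation's actual code, and the file counts below are made up), a minimal slice layout in Python might divide a rectangle into strips proportional to each neighbourhood's file count:

```python
from dataclasses import dataclass


@dataclass
class Rect:
    name: str
    x: float
    y: float
    w: float
    h: float


def slice_layout(counts, x, y, w, h, horizontal=True):
    """Split one rectangle into strips with areas proportional to counts."""
    total = sum(counts.values())
    rects = []
    offset = 0.0  # fraction of the parent rectangle already used
    # Largest entries first, so the biggest neighbourhoods come first.
    for name, n in sorted(counts.items(), key=lambda kv: -kv[1]):
        share = n / total
        if horizontal:
            rects.append(Rect(name, x + offset * w, y, share * w, h))
        else:
            rects.append(Rect(name, x, y + offset * h, w, share * h))
        offset += share
    return rects


# Hypothetical file counts per neighbourhood (illustrative numbers only).
neighbourhoods = {"Hollywood": 500, "SiliconValley": 300, "Area51": 200}
city = slice_layout(neighbourhoods, 0, 0, 100, 100)
for block in city:
    print(block)
```

Calling `slice_layout` again inside each neighbourhood's rectangle, with `horizontal=False`, would subdivide it into individual lots in the same way.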

I love the choice of music for this demo video.

-

Anonymizing the photographer

Via New Scientist, research into an image processing technique designed to mask the actual physical position of the photographer, by creating an interpolated photograph from an artificial vantage point:

The technology was conceived in September 2007, when the Burmese junta began arresting people who had taken photos of the violence meted out by police against pro-democracy protestors, many of whom were monks. “Burmese government agents video-recorded the protests and analysed the footage to identify people with cameras,” says security engineer Shishir Nagaraja of the Indraprastha Institute of Information Technology in Delhi, India. By checking the perspective of pictures subsequently published on the internet, the agents worked out who was responsible for them. …

The images can come from more than one source: what’s important is that they are taken at around the same time of a reasonably static scene from different viewing angles. Software then examines the pictures and generates a 3D “depth map” of the scene. Next, the user chooses an arbitrary viewing angle for a photo they want to post online.

Interesting stuff, but lots to contemplate here. Does an artificially-constructed photograph like this carry the same weight as a “straight” digital image? How often is an individual able to round up a multitude of photos taken of the same scene at the same time, without too much action occurring between each shot? What happens if this technique implicates a bystander who happened to be standing in the “new” camera’s position?

-

Yukikaze

-

Structured Light

Real-time 3D capture at 60fps using a cheap webcam and a simple projected pattern of light points. The structured-light code is open source, and it looks like a pretty cool project.
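For a sense of how projected points turn into depth, here is a minimal sketch of the textbook triangulation relation, assuming an idealized, rectified projector and camera pair. The function name and the numbers are hypothetical, and this is not taken from the project's own code:

```python
def depth_from_disparity(disparity_px, focal_px, baseline_m):
    """Depth of a projected point under a rectified pinhole model.

    disparity_px: horizontal shift (in pixels) between where the point was
                  projected and where the camera sees it
    focal_px:     camera focal length, in pixels
    baseline_m:   distance between projector and camera, in metres
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


# Illustrative values only: an 800 px focal length and a 10 cm baseline.
print(depth_from_disparity(40, focal_px=800, baseline_m=0.1))
```

Points that shift less are farther away, which is why a simple dot pattern is enough to recover shape once each dot can be identified in the camera image.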

(Via Make)

-

Sonar

Sonar by Renaud Hallée. Hypnotic music visualization (keyframe animated rather than generative, though). Reminds me of a cross between a backwards Osu! Tatakae! Ouendan and my favorite NASA video of all time, the Huygens Probe Descent Camera.

(Via Kitsune Noir)

-

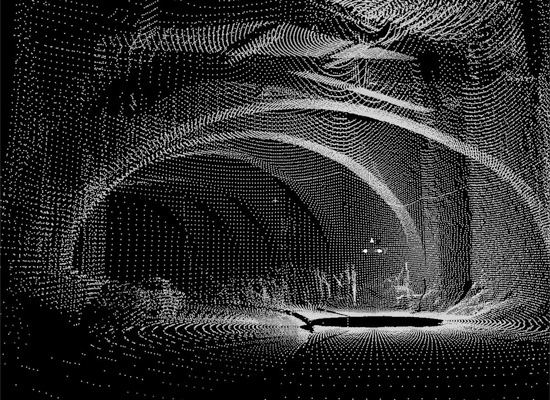

Laser Mapped Caves

La Subterranea, a research project laser-mapping 2 km of the caves and tunnels running beneath Guanajuato, Mexico.

(Via Make)

-

Rapid Prototyping with Ceramics

If you’re the sort of lab that’s engineering a method of printing ceramic materials using rapid prototyping machines, I suppose it’d make sense that you’d already have made some real-life polygonal Utah teapots! I never thought about it before, but for the 3D graphics humor value I really, really want one of these now. You can read about the Utanalog project and see finished photos (and a video explaining the whole thing) over on the Unfold blog.

-

Pretend to Be Radiohead with This Point Cloud Instructable

Pretend to be Radiohead with this Instructable guide to 3D light scanning using a projector, camera, and a bit of Processing! This is designed to create the visualization seen in the video above, but you could also use the point data for output on a 3D printer, animation package, etc. Neat.
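If you wanted to hand the scanned points to a 3D package, one common route is an ASCII PLY file. The sketch below is in Python rather than Processing, uses made-up sample points, and is not part of the Instructable:

```python
def points_to_ply(points):
    """Serialize (x, y, z) tuples as an ASCII PLY point cloud."""
    header = [
        "ply",
        "format ascii 1.0",
        f"element vertex {len(points)}",
        "property float x",
        "property float y",
        "property float z",
        "end_header",
    ]
    body = [f"{x} {y} {z}" for x, y, z in points]
    return "\n".join(header + body) + "\n"


# Hypothetical sample points standing in for real scan data.
sample = [(0.0, 0.0, 1.0), (0.5, 0.0, 1.2)]
ply_text = points_to_ply(sample)
print(ply_text)
```

Writing `ply_text` to a file ending in `.ply` should give something most mesh and animation tools can import as a point cloud.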

(Via Make)